High-Welfare Equilibrium Selection via Behavior Regularization

Accepted at AAMAS GAIW 2025 and ICML 2025 (Poster)

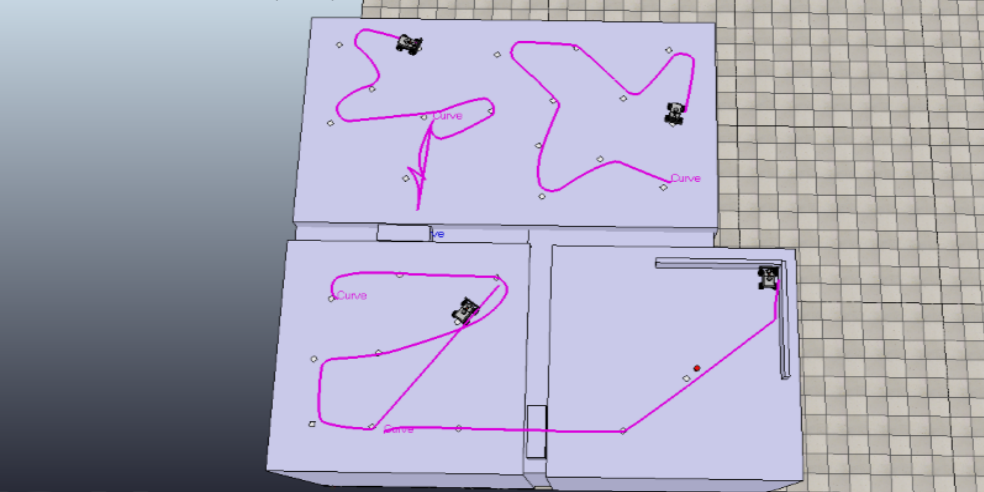

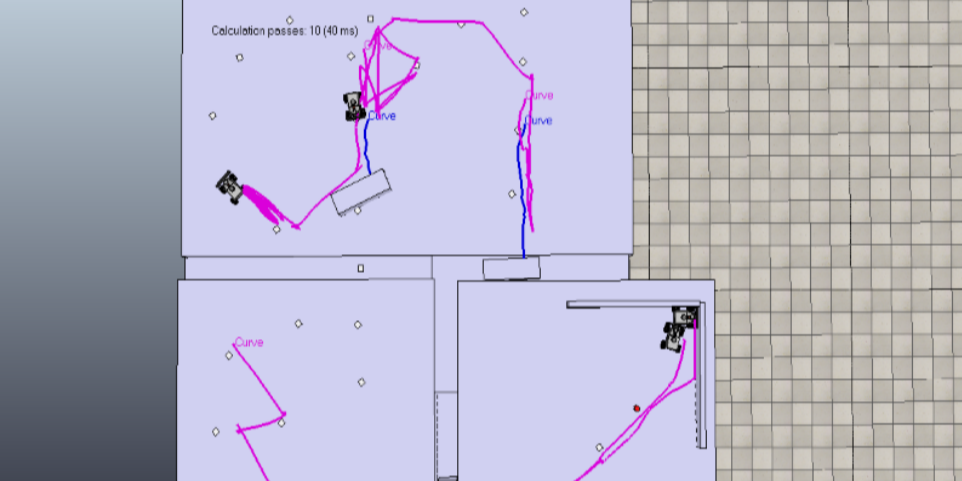

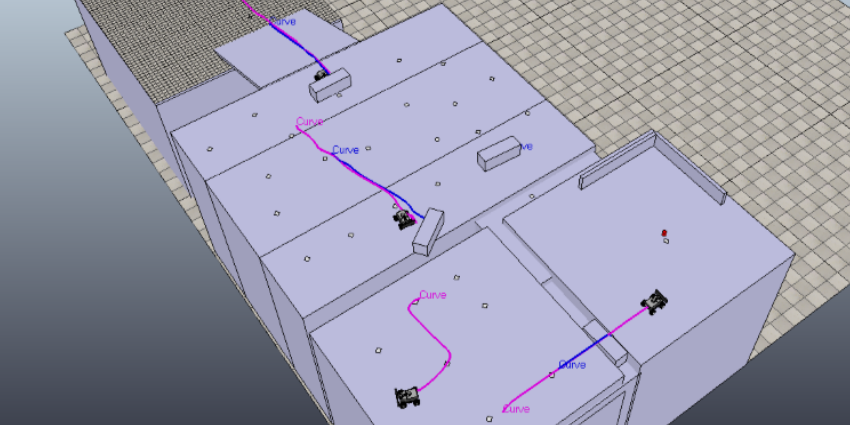

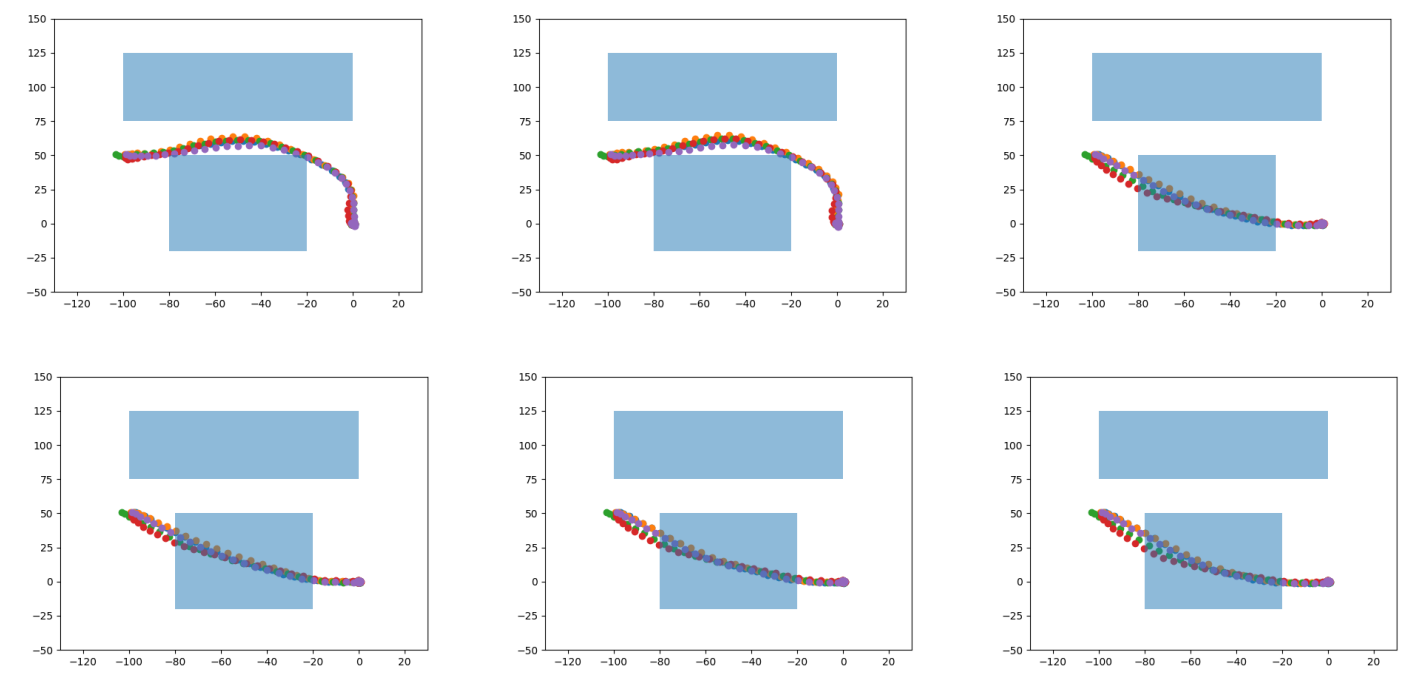

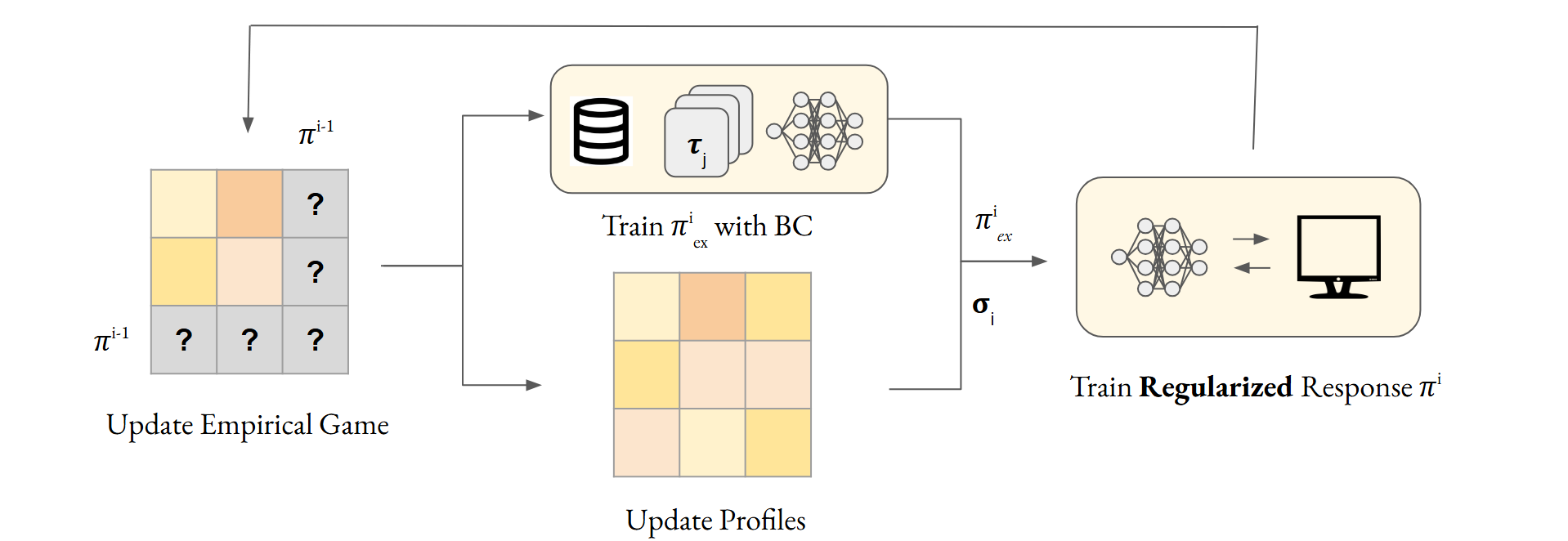

This project extends Policy-Space Response Oracles (PSRO) to bias strategy exploration toward high-welfare equilibria. Drawing on behavior regularization from offline RL, Ex2PSRO (Espresso) regularizes each best response toward the behavior described by a dataset of trajectories gathered during online exploration.
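To give a feel for what behavior regularization does to a best response, here is a minimal single-state sketch. It is not the Ex2PSRO training objective. It assumes a KL penalty toward a behavior distribution, a hypothetical weight `lam`, and a hypothetical function name `regularized_best_response`. Under those assumptions, maximizing expected payoff minus `lam * KL(pi || behavior)` over the simplex has the closed form `pi(a) ∝ behavior(a) * exp(payoffs[a] / lam)`.

```python
import math


def regularized_best_response(payoffs, behavior, lam):
    """KL-regularized best response over a finite action set (illustrative only).

    payoffs:  expected payoff of each action against a fixed opponent strategy.
    behavior: behavior distribution over actions, e.g. action frequencies
              estimated from a trajectory dataset (assumed to sum to 1).
    lam:      regularization weight (hypothetical name); must be positive.
    """
    if lam <= 0:
        raise ValueError("lam must be positive")
    # Work in log space for numerical stability; actions the behavior
    # distribution never takes keep zero probability.
    logits = [
        u / lam + math.log(b) if b > 0 else -math.inf
        for u, b in zip(payoffs, behavior)
    ]
    top = max(logits)
    weights = [math.exp(l - top) for l in logits]
    total = sum(weights)
    return [w / total for w in weights]


# Action 0 has the slightly higher payoff, but the behavior data favors action 1.
payoffs = [1.0, 0.9]
behavior = [0.1, 0.9]

nearly_unregularized = regularized_best_response(payoffs, behavior, lam=0.01)
moderately_regularized = regularized_best_response(payoffs, behavior, lam=0.1)
heavily_regularized = regularized_best_response(payoffs, behavior, lam=100.0)
```

With a small `lam` the result concentrates on the payoff-maximizing action, and with a large `lam` it approaches the behavior distribution. In between, the regularizer can tip the response toward the action the data favors when the payoff gap is small.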